Search results

Writing dynamic SQL queries using Spring Data JPA repositories and EntityManager

1. OVERVIEW

You need dynamic SQL queries when, for instance, you implement a RESTful endpoint like:

/api/films?category=Action&category=Comedy&category=Horror&minRentalRate=0.5&maxRentalRate=4.99&releaseYear=2006

where the request parameters category, minRentalRate, maxRentalRate, and releaseYear might be optional.

The resulting SQL query’s WHERE clause, or even the number of table joins, changes based on the user input.
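A minimal sketch of that idea in plain Java, building the JPQL text and its named parameters from whichever request parameters are present. The `Film` entity, its `categories`, `rentalRate`, and `releaseYear` attributes, and the `FilmQueryBuilder` class are assumptions made for illustration; they are not taken from the tutorial's code.

```java
import java.math.BigDecimal;
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

public class FilmQueryBuilder {

    /** Generated JPQL text plus the named parameters to bind to it. */
    public static final class DynamicQuery {
        public final String jpql;
        public final Map<String, Object> params;

        DynamicQuery(String jpql, Map<String, Object> params) {
            this.jpql = jpql;
            this.params = params;
        }
    }

    // Entity and attribute names below are hypothetical.
    public static DynamicQuery build(List<String> categories, BigDecimal minRentalRate,
                                     BigDecimal maxRentalRate, Integer releaseYear) {
        StringBuilder jpql = new StringBuilder("SELECT DISTINCT f FROM Film f");
        List<String> conditions = new ArrayList<>();
        Map<String, Object> params = new LinkedHashMap<>();

        // The join is only added when the caller filters by category.
        if (categories != null && !categories.isEmpty()) {
            jpql.append(" JOIN f.categories c");
            conditions.add("c.name IN :categories");
            params.put("categories", categories);
        }
        if (minRentalRate != null) {
            conditions.add("f.rentalRate >= :minRentalRate");
            params.put("minRentalRate", minRentalRate);
        }
        if (maxRentalRate != null) {
            conditions.add("f.rentalRate <= :maxRentalRate");
            params.put("maxRentalRate", maxRentalRate);
        }
        if (releaseYear != null) {
            conditions.add("f.releaseYear = :releaseYear");
            params.put("releaseYear", releaseYear);
        }
        // The WHERE clause only appears when at least one filter is present.
        if (!conditions.isEmpty()) {
            jpql.append(" WHERE ").append(String.join(" AND ", conditions));
        }
        return new DynamicQuery(jpql.toString(), params);
    }

    public static void main(String[] args) {
        DynamicQuery query = build(List.of("Action", "Comedy"), new BigDecimal("0.5"), null, 2006);
        System.out.println(query.jpql);
        System.out.println(query.params);
    }
}
```

With an EntityManager at hand, the generated text could be passed to `createQuery(...)` and each map entry bound with `setParameter(name, value)`. Binding values as parameters, rather than concatenating them into the query text, keeps user input out of the statement itself.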

One option to write dynamic SQL queries in your Spring Data JPA repositories is to use Spring Data JPA Specification and Criteria API.

But Criteria queries are hard to read and write, especially complex ones. You might have tried to come up with the SQL query first and then reverse-engineer it into a Criteria API implementation.

There are other options to write dynamic SQL or JPQL queries using Spring Data JPA.

This tutorial teaches you how to extend Spring Data JPA for your repositories to access the EntityManager so that you can write dynamic native SQL or JPQL queries.

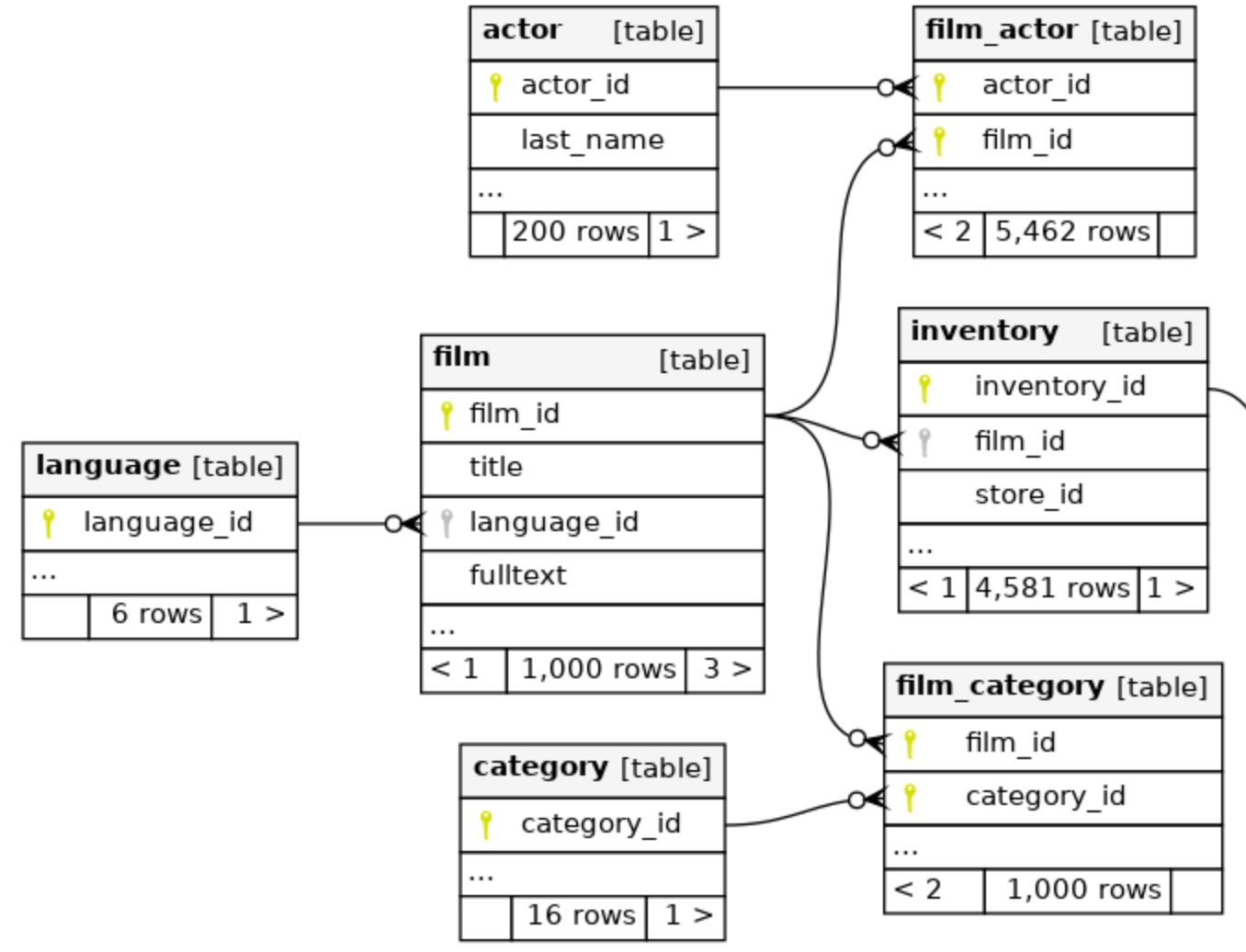

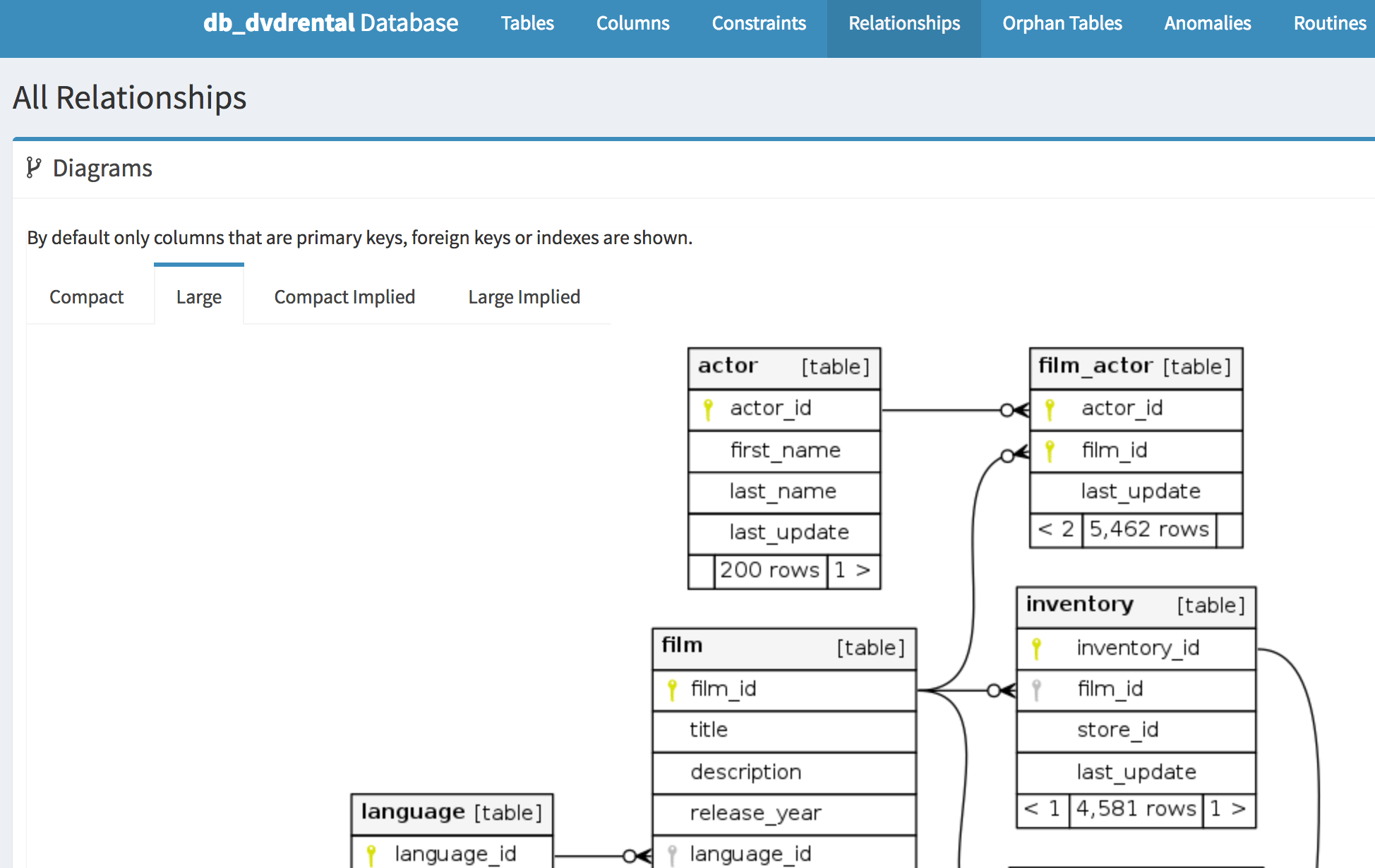

Let’s start with a partial ER diagram for the db_dvdrental relational database:

Fixing Hibernate HHH000104 firstResult maxResults warning using Spring Data JPA Specification and Criteria API

1. OVERVIEW

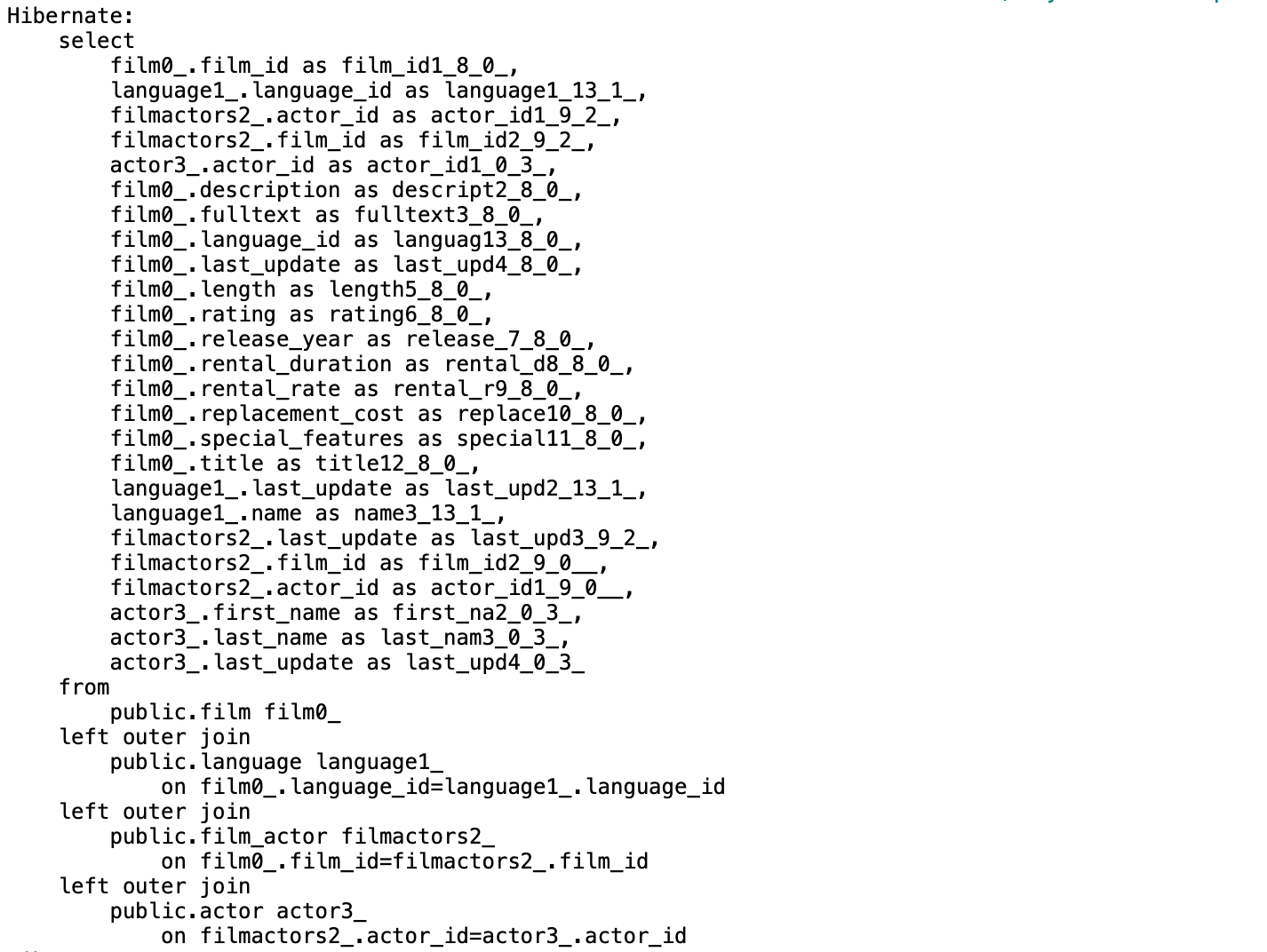

Whenever you combine pagination with SQL joins to retrieve entities and their associations, so as to prevent the N+1 select queries problem, you’ll most likely run into this Hibernate HHH000104 warning message:

HHH000104: firstResult/maxResults specified with collection fetch; applying in memory!

This warning is bad and will affect your application’s performance once your dataset grows. Let’s see why.
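The reason can be illustrated with a small plain-Java simulation (not Hibernate code; the film and actor rows are made up). A join returns one row per parent/child pair, so limiting the joined rows would cut a parent's collection short. To return complete entities, the whole result set has to be loaded and the page taken afterwards, in memory.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

public class CollectionFetchPaging {

    // Hypothetical rows as a SQL JOIN would return them: one row per (film, actor) pair.
    static final List<String[]> JOINED_ROWS = List.of(
            new String[] {"Film 1", "Actor A"},
            new String[] {"Film 1", "Actor B"},
            new String[] {"Film 1", "Actor C"},
            new String[] {"Film 2", "Actor D"},
            new String[] {"Film 3", "Actor E"});

    /** Groups joined rows into films with their actors, keeping row order. */
    static Map<String, List<String>> group(List<String[]> rows) {
        Map<String, List<String>> films = new LinkedHashMap<>();
        for (String[] row : rows) {
            films.computeIfAbsent(row[0], key -> new ArrayList<>()).add(row[1]);
        }
        return films;
    }

    /** Limits the joined ROWS, as a SQL-level limit would: collections get truncated. */
    static Map<String, List<String>> limitInSql(int maxResults) {
        return group(JOINED_ROWS.subList(0, Math.min(maxResults, JOINED_ROWS.size())));
    }

    /** Loads every row first, then keeps the first maxResults FILMS in memory. */
    static Map<String, List<String>> limitInMemory(int maxResults) {
        Map<String, List<String>> page = new LinkedHashMap<>();
        for (Map.Entry<String, List<String>> film : group(JOINED_ROWS).entrySet()) {
            if (page.size() == maxResults) {
                break;
            }
            page.put(film.getKey(), film.getValue());
        }
        return page;
    }

    public static void main(String[] args) {
        System.out.println(limitInSql(2));    // {Film 1=[Actor A, Actor B]}
        System.out.println(limitInMemory(2)); // {Film 1=[Actor A, Actor B, Actor C], Film 2=[Actor D]}
    }
}
```

The in-memory variant is correct but reads all five rows to return two films; with a large table that means loading the full result set for every page request.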

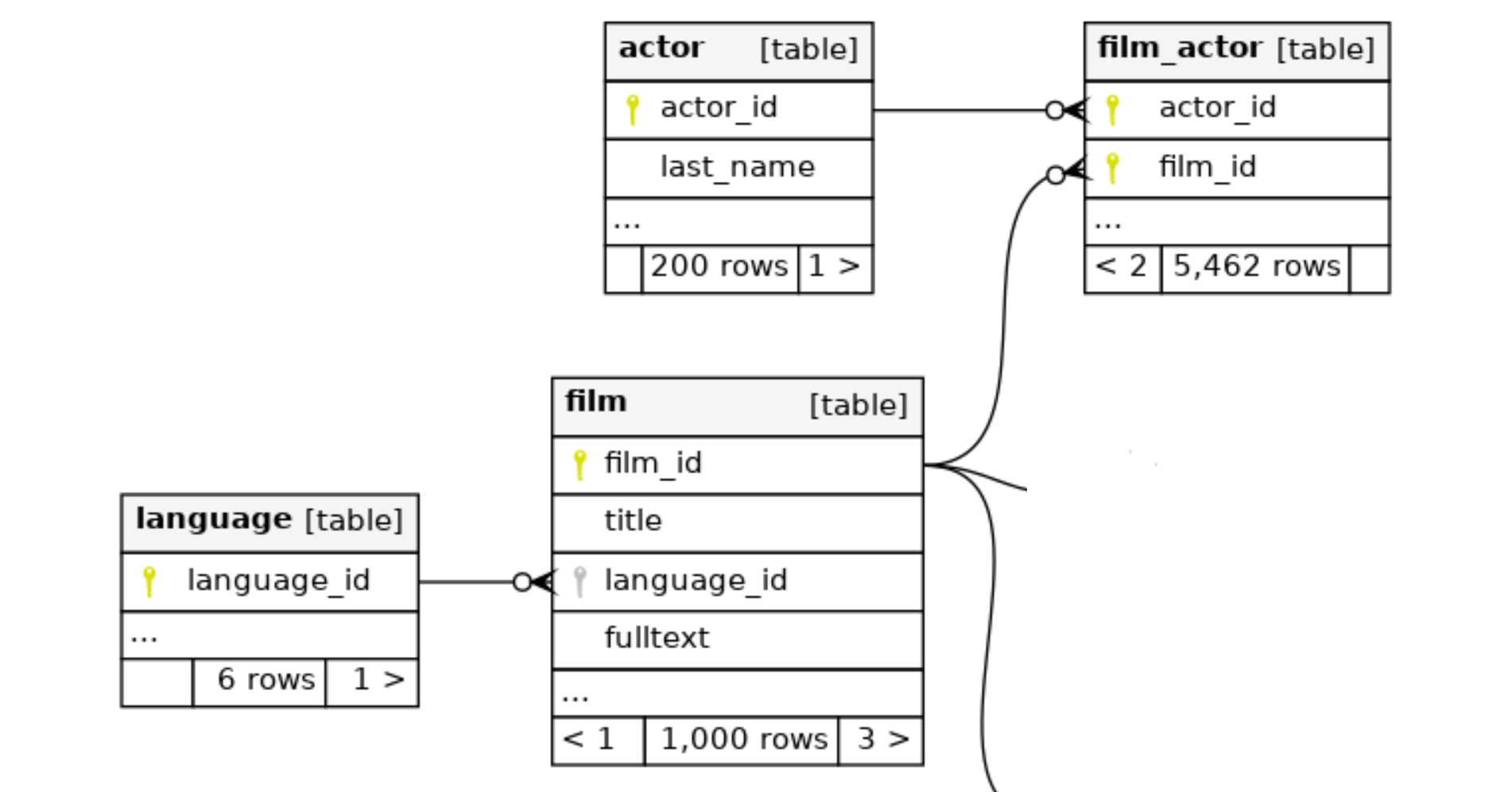

Let’s start with these table relationships:

It helps us to write or generate our domain model, and we would end up with these relevant, associated JPA entities:

Padding IN predicates using Spring Data JPA Specification

1. OVERVIEW

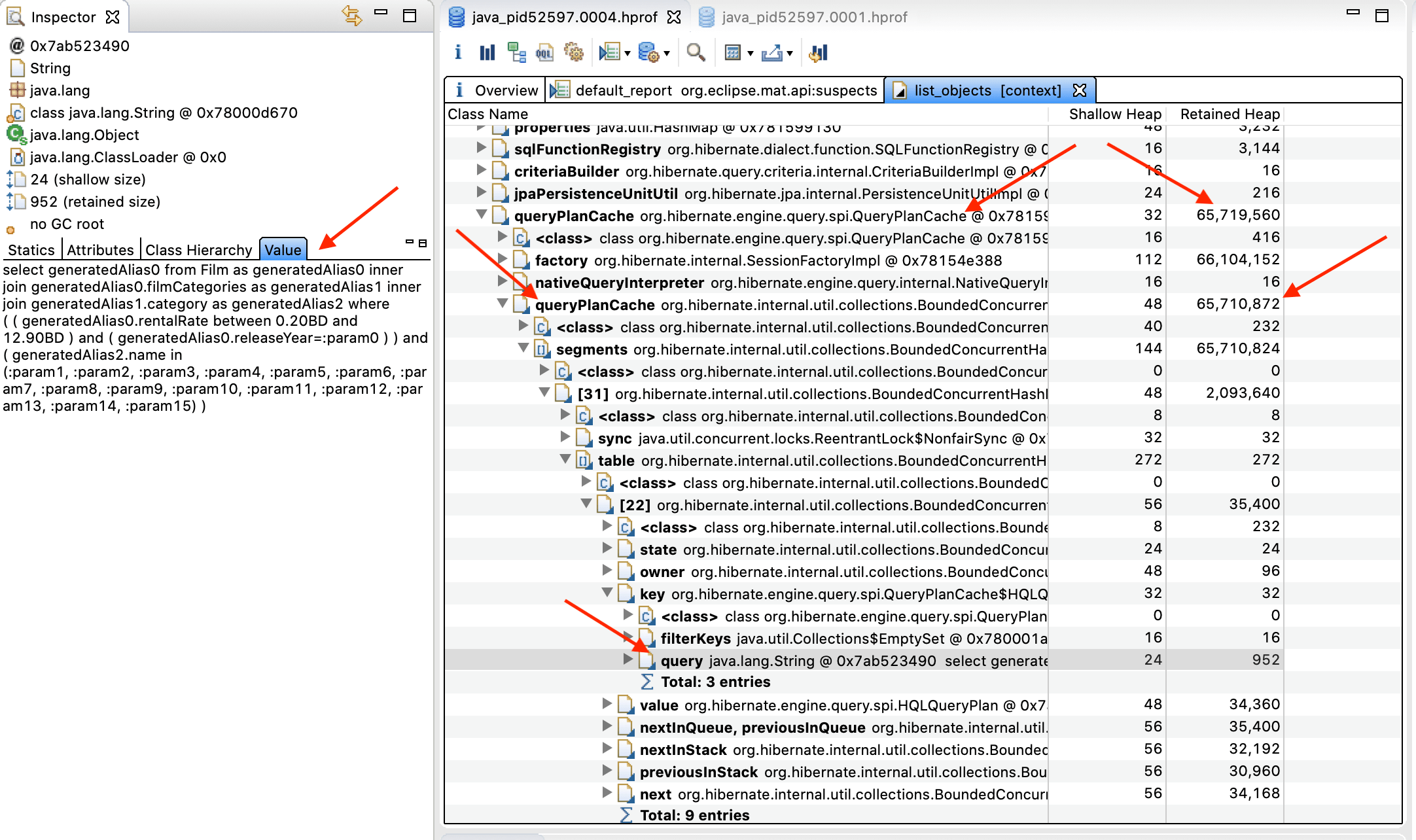

I recently discussed how Spring Data JPA Specification and Criteria queries might impact Hibernate’s QueryPlanCache. A high number of entries in the QueryPlanCache, or a variable number of values in the IN predicates, can cause frequent GC cycles that release fewer objects over time, and possibly OutOfMemoryError exceptions.

While padding the IN predicate parameters to optimize Hibernate’s QueryPlanCache, we found that setting in_clause_parameter_padding to true didn’t work when using Spring Data JPA Specification.

This blog post helps you to pad IN predicates when writing Spring Data JPA Specification and Criteria queries.
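As a rough sketch of what padding means, the helper below rounds the number of IN values up to the next power of two by repeating the last value, which is how Hibernate's in_clause_parameter_padding behaves. The `InClausePadding` class is hypothetical and is not the approach the post itself implements.

```java
import java.util.ArrayList;
import java.util.List;

public class InClausePadding {

    /** Rounds a positive size up to the next power of two (valid up to 2^30). */
    static int paddedSize(int size) {
        int highest = Integer.highestOneBit(size);
        return highest == size ? size : highest << 1;
    }

    /** Returns a copy of values, repeating the last element until the padded size is reached. */
    static <T> List<T> pad(List<T> values) {
        List<T> padded = new ArrayList<>(values);
        if (values.isEmpty()) {
            return padded; // nothing to repeat
        }
        T last = values.get(values.size() - 1);
        int target = paddedSize(values.size());
        while (padded.size() < target) {
            padded.add(last);
        }
        return padded;
    }

    public static void main(String[] args) {
        System.out.println(pad(List.of(1, 2, 3)));              // [1, 2, 3, 3]
        System.out.println(pad(List.of(1, 2, 3, 4, 5)).size()); // 8
    }
}
```

Repeating a value inside an IN list does not change which rows match, so the padded list can be bound in place of the original one.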

Troubleshooting Spring Data JPA Specification and Criteria queries impact on Hibernate's QueryPlanCache

1. OVERVIEW

Now that you know how to write dynamic SQL queries using Spring Data JPA Specification and the Criteria API, let’s evaluate the impact they might have on the performance of your Spring Boot applications.

As a Java developer, you have the responsibility to understand what SQL statements Hibernate generates and executes. It helps you to prevent the N+1 SELECT query problem, for instance.

Hibernate developers also commonly run into performance and memory problems as a result of writing queries with a variable number of values in the IN predicates.

This blog post helps you to identify heap and garbage collection problems you might experience when using Spring Data JPA Specification with Criteria queries.
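To get a feel for why a variable number of IN values matters, the sketch below counts how many distinct statement texts are produced for IN lists of 1 to 100 values. It assumes each distinct statement text occupies its own cache entry; the `film` table, the `film_id` column, and the `InClauseStatements` class are made up for illustration.

```java
import java.util.Collections;
import java.util.HashSet;
import java.util.Set;

public class InClauseStatements {

    /** Renders a statement with one positional placeholder per IN value. */
    static String render(int valueCount) {
        return "SELECT * FROM film WHERE film_id IN ("
                + String.join(", ", Collections.nCopies(valueCount, "?")) + ")";
    }

    /** Rounds a positive size up to the next power of two (valid up to 2^30). */
    static int paddedSize(int size) {
        int highest = Integer.highestOneBit(size);
        return highest == size ? size : highest << 1;
    }

    /** Counts distinct statement texts for IN lists holding 1..maxValues values. */
    static int distinctStatements(int maxValues, boolean padded) {
        Set<String> statements = new HashSet<>();
        for (int n = 1; n <= maxValues; n++) {
            statements.add(render(padded ? paddedSize(n) : n));
        }
        return statements.size();
    }

    public static void main(String[] args) {
        System.out.println(distinctStatements(100, false)); // 100
        System.out.println(distinctStatements(100, true));  // 8
    }
}
```

Without padding, every list size yields a new statement text. Rounding sizes up to powers of two collapses the same range into eight.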

Documenting your relational database using SchemaSpy

1. OVERVIEW

You joined a new organization and were asked, maybe, to troubleshoot whether a Java application has the N+1 select problem, or to write new SQL queries.

You started looking at a couple dozen JPA entities and decided to take a look at the RDBMS Entity Relationship diagram. You asked your peers and there is none.

This blog post helps you to document your relational database using SchemaSpy in different ways: via the command line, using a Maven plugin, or using Docker so that you don’t have to install the software SchemaSpy requires.

Writing dynamic SQL queries using Spring Data JPA Specification and Criteria API

1. OVERVIEW

A dynamic SQL query refers to building a query on the fly before executing it. Not just replacing query parameters by their name or position, but also including a variable number of columns in the WHERE clause, and even joining tables based on specific conditions.

How would you implement a RESTful endpoint like /api/films?minRentalRate=0.5&maxRentalRate=4.99 where you need to retrieve films from a relational database?

You could take advantage of Spring Data JPA support for:

- Declared Queries using @Query-annotated methods.
- Plain JPA Named Queries using:
  - orm.xml’s <named-query /> and <named-native-query />.
  - @NamedQuery and @NamedNativeQuery annotations.
- Derived Queries from method names. For instance, naming a method findByRentalRateBetween() in your Film repository interface.

Let’s say later on you also need to search films based on movie categories, for instance, comedy, action, horror, etc.

You might think a couple of new repository methods would do the work: one for the new category filter and another to combine the rental rate range filter with the category filter. You would also need to find out which repository method to call based on the presence of the request parameters.

You soon realize this approach is error-prone and it doesn’t scale as the number of request parameters to search films increases.

This blog post covers generating dynamic SQL queries using Spring Data JPA Specification and Criteria API, including joining tables to filter and search data.

Refreshing Feature Flags using Togglz and Spring Cloud Config Server

1. OVERVIEW

Your team decided to hide a requirement implementation behind a feature flag using Spring Boot and Togglz.

Now it’s time to switch the toggle state to make the new implementation available. It might also be possible your team needs to switch the flag back if anything goes wrong.

The togglz-spring-boot-starter Spring Boot starter autoconfigures an instance of FileBasedStateRepository. This requires you to restart your application after changing a toggle value.

You could configure a different StateRepository implementation such as combining JDBCStateRepository or MongoStateRepository with CachingStateRepository to prevent restarting your application.

This blog post helps you with the configuration and implementation of Togglz feature flags to reload new toggle values using Spring Cloud Config Server and Git.

Preventing N+1 SELECT problem using Spring Data JPA EntityGraph

1. OVERVIEW

What’s the N+1 SELECT problem?

The N+1 SELECT problem happens when an ORM like Hibernate executes one SQL query to retrieve the main entity from a parent-child relationship and then one SQL query for each child object.

The more associated entities you have in your domain model, the more queries will be executed. The more results you get when retrieving a parent entity, the more queries will be executed. This will impact the performance of your application.

This blog post helps you understand what the N+1 SELECT problem is and how to fix it for Spring Boot applications using Spring Data JPA Entity Graph.
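The query counts can be illustrated with a small simulation in plain Java. It is not Hibernate code: the in-memory map stands in for the database, and the film and actor data are made up. Each method that would hit the database increments a counter.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

public class NPlusOneDemo {

    // Hypothetical data: film id -> actor names.
    static final Map<Integer, List<String>> ACTORS_BY_FILM = Map.of(
            1, List.of("Actor A", "Actor B"),
            2, List.of("Actor C"),
            3, List.of("Actor D"));

    static int queryCount = 0;

    /** Simulates: SELECT id FROM film */
    static List<Integer> findFilmIds() {
        queryCount++;
        return new ArrayList<>(ACTORS_BY_FILM.keySet());
    }

    /** Simulates: SELECT ... FROM actor WHERE film_id = ? */
    static List<String> findActorsByFilmId(int filmId) {
        queryCount++;
        return ACTORS_BY_FILM.get(filmId);
    }

    /** Simulates a single query joining films with their actors. */
    static Map<Integer, List<String>> findFilmsWithActors() {
        queryCount++;
        return ACTORS_BY_FILM;
    }

    /** One query for the films, then one more per film: N+1. */
    static int lazyLoadingQueries() {
        queryCount = 0;
        for (int filmId : findFilmIds()) {
            findActorsByFilmId(filmId);
        }
        return queryCount;
    }

    /** Everything retrieved in one round trip. */
    static int joinedQueries() {
        queryCount = 0;
        findFilmsWithActors();
        return queryCount;
    }

    public static void main(String[] args) {
        System.out.println(lazyLoadingQueries()); // 4 (1 + 3 films)
        System.out.println(joinedQueries());      // 1
    }
}
```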

Using Azure Blob Storage as your Maven Repository

1. OVERVIEW

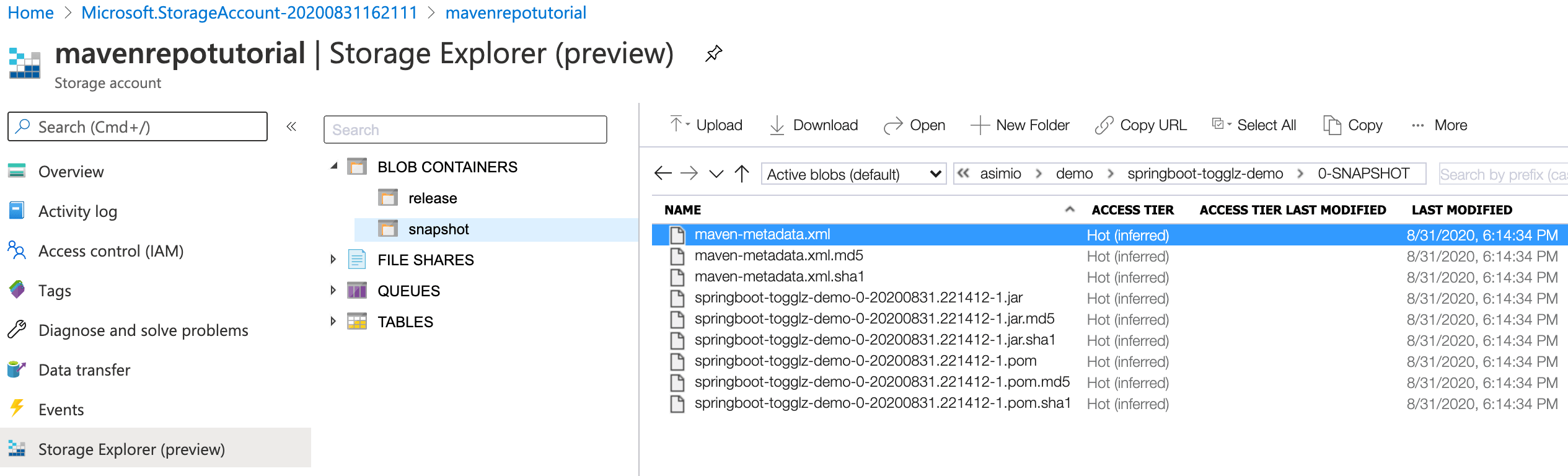

Microsoft Azure Blob Storage is a low-cost option to store your Maven or other binary artifacts. It’s an alternative to feature-rich Maven repository managers like Nexus or Artifactory when you don’t have the resources to install and maintain a server with the required software, or the budget to subscribe to a hosted plan.

A choice I wouldn’t recommend is to store your artifacts in the SCM.

This tutorial covers configuring Maven and setting up the Azure Blob Storage infrastructure to deploy your Java artifacts to, as well as the Maven configuration to include them in other applications.

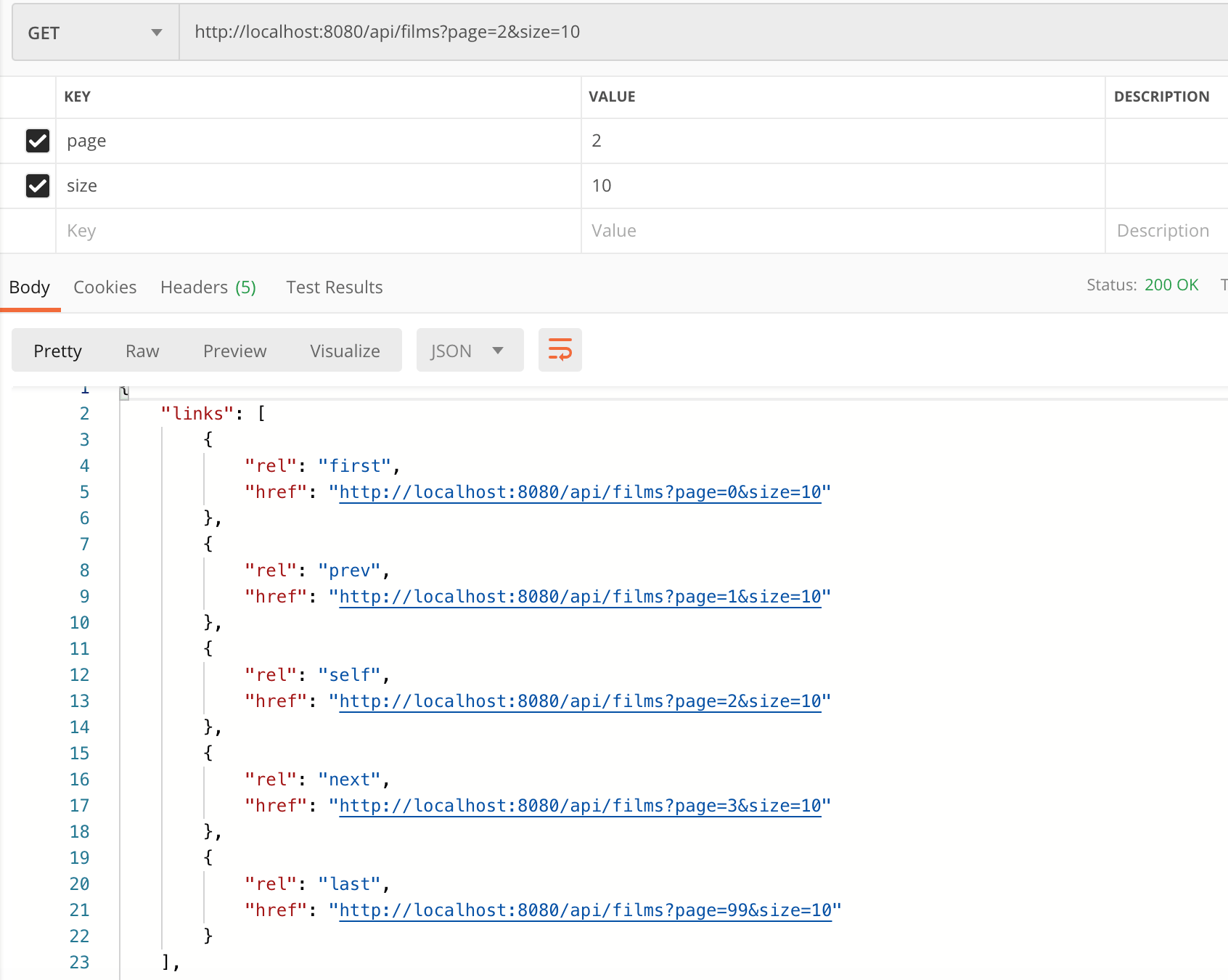

Adding HAL pagination links to RESTful applications using Spring HATEOAS

1. OVERVIEW

API endpoint implementations often involve retrieving data from some sort of storage. Retrieving data, even when filtering based on search criteria, might result in hundreds, thousands, or millions of records. Retrieving such an amount of data could lead to performance issues and to not meeting a contracted SLA, ultimately affecting the user experience.

One approach to overcome this problem is to implement pagination. You could retrieve a number of records from a data storage and add pagination links in the API response along with the page metadata back to the client application.
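A minimal sketch of how such pagination links could be computed, in plain Java. It assumes zero-based page numbers and `page` and `size` query parameters; the `PageLinks` class and the URL layout are illustrative only and are not how Spring HATEOAS builds them.

```java
import java.util.LinkedHashMap;
import java.util.Map;

public class PageLinks {

    static String url(String baseUrl, int page, int size) {
        return baseUrl + "?page=" + page + "&size=" + size;
    }

    /** Builds relation -> URL entries for the requested zero-based page. */
    static Map<String, String> build(String baseUrl, int page, int size, long totalElements) {
        if (page < 0 || size < 1) {
            throw new IllegalArgumentException("page must be >= 0 and size >= 1");
        }
        // Ceiling division: 45 elements with size 20 gives 3 pages.
        int totalPages = (int) ((totalElements + size - 1) / size);
        int lastPage = Math.max(totalPages - 1, 0);

        Map<String, String> links = new LinkedHashMap<>();
        links.put("first", url(baseUrl, 0, size));
        if (page > 0) {
            links.put("prev", url(baseUrl, page - 1, size));
        }
        links.put("self", url(baseUrl, page, size));
        if (page < lastPage) {
            links.put("next", url(baseUrl, page + 1, size));
        }
        links.put("last", url(baseUrl, lastPage, size));
        return links;
    }

    public static void main(String[] args) {
        build("/api/films", 1, 20, 45).forEach((rel, href) -> System.out.println(rel + " -> " + href));
    }
}
```

The `prev` and `next` relations are left out on the first and last pages, so a client can tell it has reached either end by their absence.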

In a previous post, I showed readers how to include HAL hypermedia in Spring Boot RESTful applications using HATEOAS. Adding related links to REST responses helps client applications decide what they might do next.

One of the next actions a client application could help a customer do is to navigate through a list of resources, for instance, to the first page of a result list.

This is a follow-up blog post to help you add HAL (Hypertext Application Language) pagination hypermedia to your API responses using Spring Boot 2.1.x.RELEASE and Spring HATEOAS 0.25.x.RELEASE.